Agent-Based Social Simulation

The MAVSVille project develops a social simulation system in which virtual agents representing humans evolve in an environment representing a city. The city comprises 814 environment objects, including commercial buildings, residential areas, roads, trees, benches, basketball courts and parks. Thousands of virtual agents perceive their surroundings through vision, auditory and olfactory sensors. They execute path-finding and collision avoidance algorithms to move within the environment. In addition, they interact with other virtual agents, and they plan and deliberate to achieve their goals.

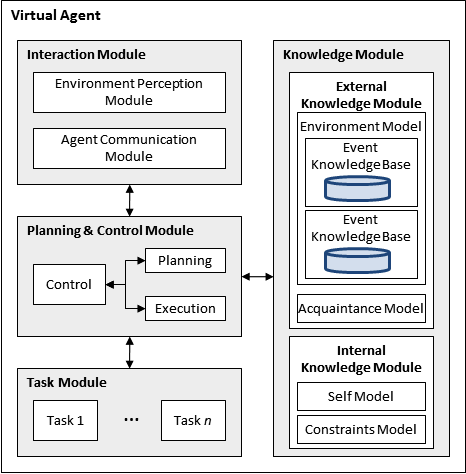

A social virtual agent is an instance of the DIVAs agent architecture that is used to model a human. As such, a social virtual agent has the following architecture:

A perception system that models human sensory systems is critical for simulating virtual agents evolving in open environments (i.e., inaccessible, non-deterministic, dynamic, continuous). To formulate plans and make decisions, virtual agents need to obtain visual information about their environment. Techniques for acquiring this information have two main goals: 1) provide accurate results, and 2) execute as quickly as possible. These goals conflict, since increasing the accuracy of a vision technique increases its processing time.

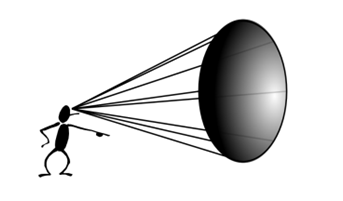

Our virtual agent vision perception algorithms approximate realistic vision while keeping execution time low. As in human vision, each agent’s perception module uses the vision sensor to process environment information and determine what the agent is able to see within a vision cone (the human eye takes in light in the shape of a widening cone).

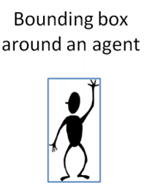

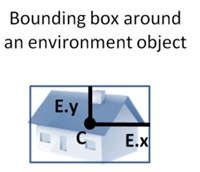

The vision sensor executes two tests: the vision test and the vision obstruction test. These tests make use of the agent vision cones in combination with bounding boxes around environment objects in order to efficiently determine which objects are seen. After the vision tests are complete, the information regarding seen objects is stored in the agent’s knowledge module. The accuracy and efficiency of our vision techniques have been verified on multi-agent scenarios involving thousands of agents executing in simulated real-time.
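The two tests can be illustrated with a minimal 2D sketch. It is not the project's implementation: the names `Box`, `in_cone`, `segment_hits_box` and `visible_objects` are hypothetical, the cone is flattened to a planar wedge, and the obstruction test is assumed to be a single line of sight from the agent to the center of each candidate's bounding box.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned 2D bounding box around an environment object (hypothetical)."""
    name: str
    min_x: float
    min_y: float
    max_x: float
    max_y: float

    def center(self):
        return ((self.min_x + self.max_x) / 2, (self.min_y + self.max_y) / 2)

    def corners(self):
        return [(self.min_x, self.min_y), (self.min_x, self.max_y),
                (self.max_x, self.min_y), (self.max_x, self.max_y)]


def in_cone(eye, heading_deg, half_angle_deg, max_range, point):
    """Vision test for one point: within range and within the cone's angle."""
    dx, dy = point[0] - eye[0], point[1] - eye[1]
    dist = math.hypot(dx, dy)
    if dist == 0:
        return True
    if dist > max_range:
        return False
    angle = math.degrees(math.atan2(dy, dx))
    # Signed angular difference to the heading, wrapped to [-180, 180).
    diff = (angle - heading_deg + 180) % 360 - 180
    return abs(diff) <= half_angle_deg


def segment_hits_box(p, q, box):
    """Slab test: does the segment p -> q intersect the box?"""
    t_min, t_max = 0.0, 1.0
    axes = ((p[0], q[0], box.min_x, box.max_x), (p[1], q[1], box.min_y, box.max_y))
    for start, end, lo, hi in axes:
        d = end - start
        if d == 0:
            # Segment is parallel to this slab: it must start inside it.
            if start < lo or start > hi:
                return False
            continue
        t1, t2 = (lo - start) / d, (hi - start) / d
        if t1 > t2:
            t1, t2 = t2, t1
        t_min, t_max = max(t_min, t1), min(t_max, t2)
        if t_min > t_max:
            return False
    return True


def visible_objects(eye, heading_deg, half_angle_deg, max_range, boxes):
    """Run the vision test, then the obstruction test, and return seen names."""
    candidates = [
        b for b in boxes
        if any(in_cone(eye, heading_deg, half_angle_deg, max_range, pt)
               for pt in b.corners() + [b.center()])
    ]
    seen = []
    for target in candidates:
        blocked = any(
            other is not target and segment_hits_box(eye, target.center(), other)
            for other in boxes
        )
        if not blocked:
            seen.append(target.name)
    return seen


boxes = [
    Box("wall", 4, -2, 5, 2),
    Box("bench", 9, -0.5, 10, 0.5),    # directly behind the wall
    Box("tree", 5, 2.5, 6, 3.5),       # off to the side, still inside the cone
    Box("statue", -6, -0.5, -5, 0.5),  # behind the agent
]
seen = visible_objects((0.0, 0.0), 0.0, 30.0, 100.0, boxes)
print(seen)  # ['wall', 'tree']
```

Testing only bounding-box corners and centers keeps the per-object cost constant, which reflects the accuracy versus speed trade-off described earlier: a box that straddles the cone without any sampled point inside it would be missed by this simplified version.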

Most Multi-Agent Based Simulation Systems (MABS) have tackled the challenge of perception by providing agents with global or local environmental knowledge. Although these approaches are straightforward and easy to implement, they are ill-suited to simulating realistic scenarios. To date, most MABS that implement some form of perception have focused heavily on a single sense: vision. Because other senses such as smell or hearing are almost never integrated into MABS, the combination of perception data has drawn very limited attention.

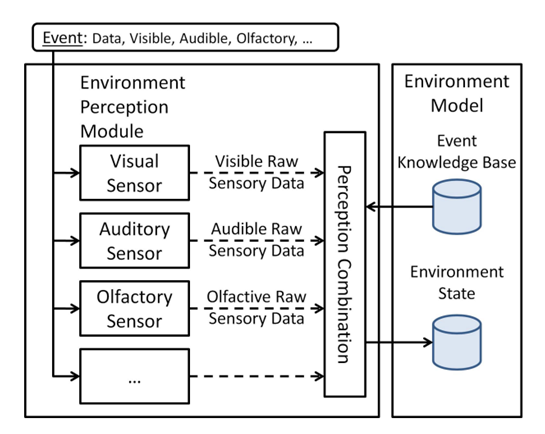

We have developed an agent perception module that integrates vision, auditory and olfactory sensors. The parameters of each sensor can be configured individually for each agent and can be modified at execution time. The perception combination algorithm combines the sensory data collected from the various sensors to produce knowledge.
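As a rough illustration of per-agent sensor configuration and of combining sensory data, consider the sketch below. The combination rule shown (grouping detections by event and recording which senses detected it) is an assumption made for this example, since the actual combination algorithm is not described here; `SensorConfig`, `PerceptionModule`, `configure`, `combine` and the `range_m` parameter are all hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class SensorConfig:
    """Hypothetical per-sensor parameters; real sensors may expose others."""
    enabled: bool = True
    range_m: float = 50.0


def default_sensors():
    # A fresh dictionary per agent, so agents never share configuration.
    return {name: SensorConfig() for name in ("vision", "auditory", "olfactory")}


@dataclass
class PerceptionModule:
    sensors: dict = field(default_factory=default_sensors)

    def configure(self, sensor, **params):
        """Change sensor parameters while the simulation is running."""
        config = self.sensors[sensor]
        for key, value in params.items():
            if not hasattr(config, key):
                raise AttributeError(f"unknown sensor parameter: {key}")
            setattr(config, key, value)

    def combine(self, readings):
        """Merge raw detections into knowledge: event id -> senses that detected it.

        `readings` maps a sensor name to a list of (event_id, distance) pairs.
        """
        knowledge = {}
        for sensor, detections in readings.items():
            config = self.sensors[sensor]
            if not config.enabled:
                continue
            for event_id, distance in detections:
                if distance <= config.range_m:
                    knowledge.setdefault(event_id, set()).add(sensor)
        return knowledge


readings = {
    "vision": [("fire", 20.0)],
    "olfactory": [("fire", 20.0)],
    "auditory": [("siren", 45.0)],
}

agent_a = PerceptionModule()
agent_b = PerceptionModule()
agent_b.configure("auditory", range_m=10.0)  # modified at execution time

knowledge_a = agent_a.combine(readings)
knowledge_b = agent_b.combine(readings)
print(knowledge_a)
print(knowledge_b)
```

Here both agents receive the same raw readings, but the second agent no longer learns about the siren after its auditory range is reduced, while the first agent's configuration is unaffected.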

Agent Perception Module

The perception module receives event data as input, which includes information about the event as well as the sensor interfaces it exposes. For example, an explosion exposes audible, visible, olfactory, and tactile interfaces, whereas a siren exposes only an audible interface. Once a sensor receives event data, it examines the interfaces to determine whether the information is perceivable. Using the agent’s current state and the raw sensory data, the sensor then executes a specialized algorithm to determine what the agent actually perceives.
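The interface check followed by a sensor-specific algorithm might look like the following sketch for an auditory sensor. All names (`Event`, `AgentState`, `AuditorySensor`, `hearing_radius`, `intensity`) are hypothetical, and the distance-based hearing rule is an assumption chosen only to stand in for the specialized algorithm.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    """Event data: a description plus the sensor interfaces it exposes."""
    name: str
    position: tuple
    interfaces: frozenset
    intensity: float = 1.0  # assumed scaling factor for how far the event carries


@dataclass
class AgentState:
    position: tuple
    hearing_radius: float


class AuditorySensor:
    interface = "audible"

    def perceive(self, state, event):
        # Step 1: the event is only perceivable if it exposes this interface.
        if self.interface not in event.interfaces:
            return None
        # Step 2: sensor-specific algorithm using the agent's current state.
        distance = math.dist(state.position, event.position)
        if distance > state.hearing_radius * event.intensity:
            return None
        return {"event": event.name, "sense": "hearing", "distance": distance}


agent = AgentState(position=(0.0, 0.0), hearing_radius=60.0)
sensor = AuditorySensor()

siren = Event("siren", (30.0, 40.0), frozenset({"audible"}))
explosion = Event("explosion", (30.0, 40.0),
                  frozenset({"audible", "visible", "olfactory", "tactile"}))
flash = Event("flash", (30.0, 40.0), frozenset({"visible"}))
far_siren = Event("far siren", (300.0, 400.0), frozenset({"audible"}))

heard = [sensor.perceive(agent, e) for e in (siren, explosion, flash, far_siren)]
print(heard)
```

The flash is rejected at the interface check without any distance computation, and the far siren passes the interface check but is rejected by the sensor's own algorithm.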