Connected and Autonomous Vehicles

In recent years, the automotive industry has invested heavily in Connected and Autonomous Vehicles (CAVs). CAVs are vehicles that communicate with each other and with roadside infrastructure through vehicle-to-vehicle (V2V) and vehicle-to-infrastructure (V2I) communication technologies. Additionally, CAVs are equipped with various sensors that allow them to perceive their surroundings and the road network.

The purpose of this research is to address various applications for connected and autonomous vehicles managed by onboard agent-based systems.

An interesting application of CAVs is driverless convoying, whereby a group of CAVs travels together for mutual support and information sharing. Convoy driving has several benefits: it improves roadway safety, decreases traffic congestion, and reduces energy consumption. However, forming convoys presents several challenges: coalitions can form, dissolve, and change their structure dynamically. In addition, a convoy’s lifespan can range from a few seconds to several hours.

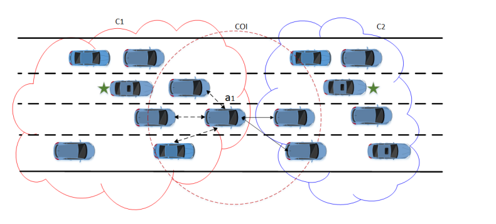

In our research, we consider CAVs managed by on-board agent-based systems driving on a highway. We propose an approach for dynamic Coalition Structure Generation (CSG) that we call connected vehicle Coalition Structure Generation (cvCSG).

In the context of our work, cvCSG aims at partitioning the set of CAVs into disjoint coalitions, each managed by a leader.
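The partitioning requirement can be illustrated with a small validity check. This is only a sketch: the function name `is_valid_coalition_structure` and the representation of a coalition structure as a mapping from leader ID to member IDs are assumptions made for illustration, not part of cvCSG itself.

```python
def is_valid_coalition_structure(cav_ids, coalitions):
    """Check that `coalitions` partitions `cav_ids` into disjoint, led groups.

    coalitions: dict mapping a leader ID to the set of member IDs
    (the leader is assumed to be listed among its own members).
    """
    seen = set()
    for leader, members in coalitions.items():
        if leader not in members:
            return False          # every coalition must contain its leader
        if seen & members:
            return False          # coalitions must not overlap
        seen |= members
    return seen == set(cav_ids)   # every CAV belongs to exactly one coalition


structure = {"v1": {"v1", "v2"}, "v3": {"v3", "v4", "v5"}}
print(is_valid_coalition_structure(["v1", "v2", "v3", "v4", "v5"], structure))
```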

cvCSG is based on the following premises:

- There is no central processing node for the vehicle system. Leaders are elected and act as centers of control for their respective coalitions only.

- There is no central node that has global knowledge about the traffic. Both leaders and member-CAVs acquire relevant information about their surroundings through V2V communication.

- Communication is achieved through single-hop or multi-hop routing schemes.

- The traffic environment is assumed to be dynamic, i.e., the network topology continuously changes and the effect of these changes is not known in advance.

A CAV is defined by its ID and a state vector including the vehicle’s position, its orientation, its velocity, and its sensor range.
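As a minimal sketch, this definition maps naturally onto a small record type. The field names, units, the 2D coordinate convention, and the helper `can_sense` are assumptions chosen for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class CAVState:
    x: float             # position along the highway, in metres (assumed convention)
    y: float             # lateral position, in metres (assumed convention)
    heading: float       # orientation, in radians
    speed: float         # velocity magnitude, in m/s
    sensor_range: float  # sensing radius, in metres


@dataclass(frozen=True)
class CAV:
    cav_id: str
    state: CAVState


def can_sense(a: CAV, b: CAV) -> bool:
    """Return True if b lies within a's sensor range (Euclidean distance)."""
    distance = math.hypot(a.state.x - b.state.x, a.state.y - b.state.y)
    return distance <= a.state.sensor_range


lead = CAV("v1", CAVState(x=0.0, y=0.0, heading=0.0, speed=30.0, sensor_range=100.0))
rear = CAV("v2", CAVState(x=60.0, y=3.5, heading=0.0, speed=28.0, sensor_range=50.0))
print(can_sense(lead, rear), can_sense(rear, lead))
```

Because sensor ranges may differ between vehicles, sensing is not necessarily symmetric in this sketch.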

cvCSG is a two-phase approach that aims to rapidly find efficient suboptimal solutions.

Two parameters are considered in the definition of the characteristic function: the coalition size and the distances between coalition members.
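To make the role of these two parameters concrete, here is one possible shape such a function could take. The actual characteristic function used by cvCSG is not given here, so the weighted-sum form, the function name `coalition_value`, and every constant below are hypothetical.

```python
from itertools import combinations


def coalition_value(positions, max_size=8, max_gap=100.0, w_size=0.5, w_dist=0.5):
    """Hypothetical characteristic function over 1D highway positions (metres).

    Rewards larger coalitions (up to max_size) and compact ones
    (small mean pairwise distance). All parameters are illustrative.
    """
    n = len(positions)
    if n == 0:
        return 0.0
    size_term = min(n, max_size) / max_size
    if n == 1:
        dist_term = 0.0  # a lone vehicle earns no compactness credit
    else:
        gaps = [abs(a - b) for a, b in combinations(positions, 2)]
        mean_gap = sum(gaps) / len(gaps)
        dist_term = max(0.0, 1.0 - mean_gap / max_gap)
    return w_size * size_term + w_dist * dist_term


tight = coalition_value([0.0, 20.0, 40.0])
spread = coalition_value([0.0, 80.0, 160.0])
print(tight > spread)
```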

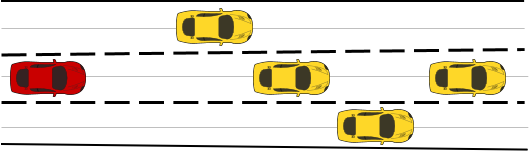

CAVs in a coalition continuously exchange information and cooperate to make rewarding decisions for the coalition. If one of the coalition’s CAVs detects a misbehaving vehicle, the detecting CAV alerts all coalition members to the emergency. The coalition members must then cooperatively plan and find non-conflicting actions to avoid collisions with the misbehaving vehicle when possible.

The image above depicts an on-road scenario including a coalition of CAVs and a misbehaving vehicle on a highway.

Decision making approach

We propose a two-step decision-making approach.

- In the first step, each CAV executes the CAV decision-making algorithm to derive individual, prioritized mitigation action plans. An action plan is a sequence of consecutive CAV actions (such as a lane change). Each CAV sends its prioritized action plans to the coalition leader.

- In the second step, the coalition leader selects a final action plan for each CAV, choosing non-colliding plans whenever possible.
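The second step can be sketched as a small search over the submitted plans. The selection rule shown here (backtracking in priority order, falling back to each CAV's top plan when no conflict-free combination exists) is an assumption, as is the encoding of a plan as one `(lane, slot)` cell per time step; the names `plans_conflict` and `select_plans` are illustrative.

```python
def plans_conflict(plan_a, plan_b):
    """Two plans conflict if they occupy the same cell at the same time step."""
    return any(cell_a == cell_b for cell_a, cell_b in zip(plan_a, plan_b))


def select_plans(prioritized):
    """Pick one plan per CAV, preferring higher-priority, non-conflicting plans.

    prioritized: dict mapping cav_id to a non-empty list of plans, best first.
    Returns (assignment, conflict_free).
    """
    cav_ids = list(prioritized)
    chosen = {}

    def backtrack(i):
        if i == len(cav_ids):
            return True
        cav = cav_ids[i]
        for plan in prioritized[cav]:
            if all(not plans_conflict(plan, other) for other in chosen.values()):
                chosen[cav] = plan
                if backtrack(i + 1):
                    return True
                del chosen[cav]
        return False

    if backtrack(0):
        return dict(chosen), True
    # No conflict-free combination exists: fall back to each CAV's top plan.
    return {cav: plans[0] for cav, plans in prioritized.items()}, False


submitted = {
    "a": [((0, 1), (0, 2)), ((1, 1), (1, 2))],
    "b": [((0, 0), (0, 2)), ((0, 0), (0, 1))],
}
assignment, conflict_free = select_plans(submitted)
print(assignment, conflict_free)
```

In this example, CAV `b` receives its second-priority plan because its first choice would share a cell with CAV `a` at the second time step.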

For the first step, we formulate the individual CAV decision-making problem as a Multi-agent Markov Decision Process (MMDP) and solve it using reinforcement learning algorithms based on Monte Carlo Tree Search (MCTS).

Approximate Simultaneous Move (ASM) algorithm

Monte Carlo Tree Search (MCTS) does not scale well with the number of CAVs, as the MCTS tree size grows exponentially with the number of CAVs.
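A quick calculation shows how fast this growth is. The sketch assumes that every tree node branches on the joint action of all CAVs and that each CAV has the same number of actions; both assumptions, and the function names, are for illustration only.

```python
def joint_branching_factor(actions_per_cav, num_cavs):
    """Number of joint actions when every CAV picks one action simultaneously."""
    return actions_per_cav ** num_cavs


def full_tree_nodes(actions_per_cav, num_cavs, depth):
    """Node count of a fully expanded joint-action tree of the given depth."""
    b = joint_branching_factor(actions_per_cav, num_cavs)
    return sum(b ** d for d in range(depth + 1))


for n in (2, 4, 6):
    print(n, joint_branching_factor(3, n), full_tree_nodes(3, n, depth=3))
```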

We propose Approximate Simultaneous Move (ASM), an MCTS-based algorithm that improves the scalability of MCTS. ASM reduces the MCTS tree size exponentially and scales to a larger number of CAVs than other state-of-the-art CAV cooperative decision-making approaches. ASM reduces the branching factor in the MCTS tree by a factor equal to the number of CAVs.

AdaptIve Reward Cooperative Action Planning (AirCap) algorithm

ASM, like other MCTS-based CAV decision-making algorithms, uses a fixed reward function for all coalition states. However, applying the same fixed reward to every coalition state gives suboptimal results.

We propose AdaptIve Reward Cooperative Action Planning (AirCap), an MCTS-based algorithm that uses a dynamic reward function for different coalition states. To achieve this, we update the reward function weights for each action based on the coalition state that results from that action. AirCap significantly improves upon ASM, which uses fixed reward function weights, and outperforms state-of-the-art centralized and decentralized algorithms.
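The idea of state-dependent reward weights can be sketched as follows. AirCap's actual reward terms and update rule are not specified here, so the two reward components (`safety`, `progress`), the gap-based urgency measure, and all constants are hypothetical.

```python
def adaptive_weights(min_gap_m, danger_gap_m=30.0):
    """Hypothetical weight schedule driven by the resulting coalition state.

    min_gap_m: smallest distance (metres) between any coalition CAV and the
    misbehaving vehicle in the state that results from the action.
    The closer the threat, the more weight safety receives.
    """
    urgency = max(0.0, min(1.0, 1.0 - min_gap_m / danger_gap_m))
    w_safety = 0.5 + 0.5 * urgency
    return {"safety": w_safety, "progress": 1.0 - w_safety}


def reward(features, min_gap_m):
    """Weighted sum of reward features using the state-dependent weights."""
    weights = adaptive_weights(min_gap_m)
    return sum(weights[name] * features[name] for name in weights)


print(adaptive_weights(60.0), adaptive_weights(15.0), adaptive_weights(0.0))
```

A fixed-weight scheme corresponds to always returning the same dictionary from `adaptive_weights`, regardless of the resulting state.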

Dynamic Coalition Structure Generation for Connected and Autonomous Vehicles

Approximate Simultaneous Move (ASM) algorithm for cooperative collision avoidance

AdaptIve Reward Cooperative Action Planning (AirCap) algorithm for cooperative collision avoidance (Note the 5-second delay while the CAVs execute AirCap to find action plans.)